Do Robots Really Have Emotions? | Can Artificial Intelligence Feel Emotions?


Today, you and I will quickly take a look at the topic “Do Robots Really Have Emotions? | Can Artificial Intelligence Feel Emotions?”.

This has become necessary because we have seen, over time, that many people have been searching for this question and related topics.

If you are among those who have been searching for answers to [why can’t robots have emotions, robots with human emotions, do robots have emotions, robots emotions, can ai feel emotions, can robots feel, can robots feel pain, robots that can recognise human emotions, Do Robots Really Have Emotions? | Can Artificial Intelligence Feel Emotions?], then you are not the only one.

The good news is that you will find all this information right here on this blog.


Where Do The Emotions Come From?

This relates to one of my favorite technologies of all time: Artificial Intelligence! In fact, I think almost every disruptive technology now involves AI, such as autonomous cars, smart cities, flying taxis and even judging support at the Olympic Games. Without AI, a robot is basically just a bunch of metal parts.

Computer scientists have created different technologies to build databases of emotions and to shape how their robots process them internally and respond externally. For example, roboticists at Tsinghua University created an “emotion classifying” algorithm for a chatbot that can recognize various emotions in more than 20,000 posts on the Weibo social media platform.

The system tags millions of emotional reactions to the original posts, creating a massive database the robot can draw on to answer questions or express emotions.
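To make the idea of tagging reactions a bit more concrete, here is a minimal Python sketch. It is not the Tsinghua algorithm, which relies on a trained classifier; it swaps in a tiny hypothetical keyword lexicon (`EMOTION_KEYWORDS`) and only shows how tagged replies could be grouped into a per-post emotion database.

```python
import re
from collections import defaultdict

# Hypothetical keyword lexicon; a real system would learn these associations from data.
EMOTION_KEYWORDS = {
    "happiness": {"great", "love", "haha", "awesome"},
    "sadness": {"sad", "miss", "cry"},
    "anger": {"hate", "angry", "annoying"},
}


def tag_emotion(text):
    """Return the emotion whose keywords occur most often in text, or 'neutral' if none occur."""
    words = re.findall(r"[a-z]+", text.lower())
    scores = {
        emotion: sum(word in keywords for word in words)
        for emotion, keywords in EMOTION_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "neutral"


def build_emotion_database(threads):
    """Map each post to its replies, grouped by the emotion tagged on each reply."""
    database = {}
    for post, replies in threads:
        groups = defaultdict(list)
        for reply in replies:
            groups[tag_emotion(reply)].append(reply)
        database[post] = dict(groups)
    return database
```

For example, feeding it one post with the replies "That is awesome, haha!" and "I will miss you at the old office." files the first under happiness and the second under sadness. A keyword list is crude, but it shows the shape of the data a chatbot could later draw on.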

If you’ve seen the movie Her, you’ll remember that at the beginning the AI voiced by Scarlett Johansson collected all the past data about her “boyfriend”.

The more he talked to ‘her’, the more addicted he became, because she gathered more and more information about him in every conversation: his habits, routines, patterns of reaction, the things that thrilled him, the things that pissed him off, and so on.

She knew how to respond not only to his actions and words but also to his emotions. She understood him better than he understood himself.

So, should I call it Artificial Emotional Intelligence? I don’t know whether that sounds cool or scary, haha, but I’d want that artificial thing if possible, lol!

Do Robots Really Have Feelings?

None of this means robots actually feel angry, happy or any other mental state the way we do; so far, they are only designed to display emotions.

Think about how often we say “sorry”, “please” or “thanks” before we actually feel regretful or grateful. Most of the time, we say those words because we were taught as kids that they signal the right behavior, right?

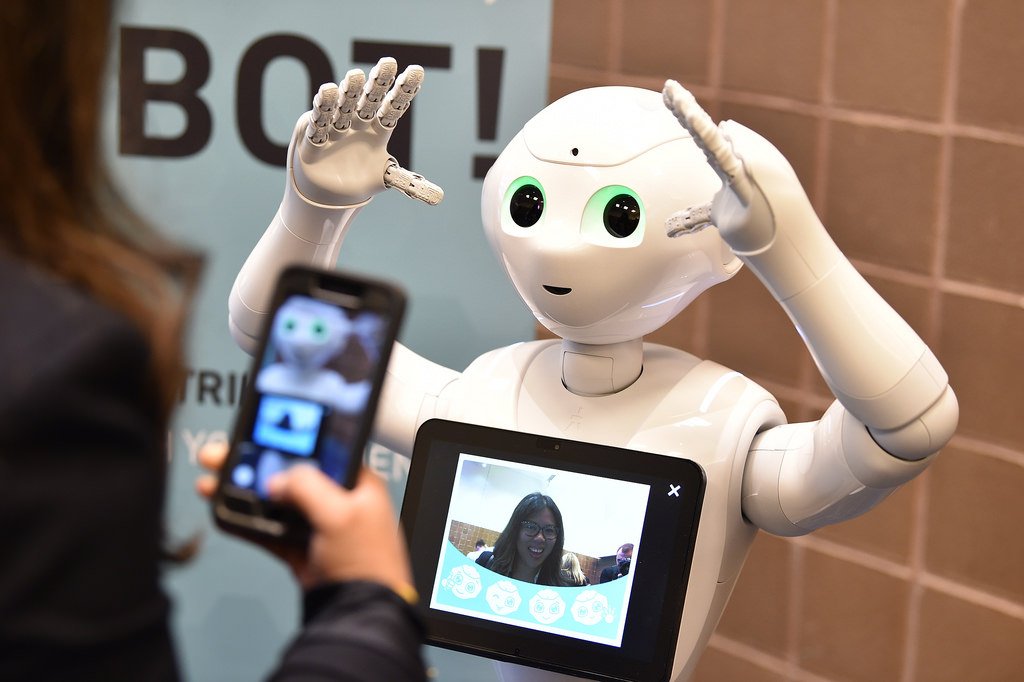

Pepper is the first robot designed to recognize and react to human emotions.

It was launched by the Japanese company SoftBank and works in stores. Although Pepper can only recognize and react to four basic human emotions, including joy, anger and sadness, by collecting and analyzing audio and video data, it can help store owners increase sales by telling funny jokes, chatting and encouraging customers to buy.
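Here is a rough Python sketch of that “recognize, then react” loop, assuming separate audio and video models already output a score per emotion. The emotion labels, the equal audio/video weighting and the `REACTIONS` table are hypothetical illustrations, not Pepper’s actual software.

```python
# Illustrative emotion labels and shop reactions (hypothetical, not Pepper's real behavior set).
REACTIONS = {
    "joy": "recommend a product that matches the good mood",
    "sadness": "tell a funny joke",
    "anger": "apologize and call a human staff member",
    "surprise": "explain the product in more detail",
}


def fuse_scores(audio_scores, video_scores, audio_weight=0.5):
    """Combine per-emotion scores from the audio and video channels with a weighted average."""
    emotions = set(audio_scores) | set(video_scores)
    return {
        emotion: audio_weight * audio_scores.get(emotion, 0.0)
        + (1.0 - audio_weight) * video_scores.get(emotion, 0.0)
        for emotion in emotions
    }


def choose_reaction(audio_scores, video_scores):
    """Pick the emotion with the highest fused score and return it with its reaction."""
    fused = fuse_scores(audio_scores, video_scores)
    emotion = max(fused, key=fused.get)
    return emotion, REACTIONS.get(emotion, "keep chatting")
```

Averaging the two channels means a sad voice can outweigh a neutral face (or vice versa); a real robot would tune that weight rather than fix it at 0.5.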

A technology called “lovotics” was developed by Samani, a director at National Taipei University. Lovotics aims to connect robotic sciences, including artificial intelligence and mechanical engineering, with the science behind human love.

According to this theory, the way we feel about others is determined by two sets of hormones working at the same time: biological and emotional.

So, Samani built an artificial endocrine system for robots based on that theory. The endocrine system is made up of all the glands that produce hormones, which influence the way your body works.

Biological hormones govern our heartbeat, appetite and blood pressure. When we feel hungry, the level of ghrelin (the hunger hormone) rises.

Similarly, digital ghrelin makes the robot “hungry” so that it wants to recharge its battery. Emotional hormones, on the other hand, regulate our emotions. You must have heard of dopamine, serotonin, endorphins or oxytocin, the ‘hormone of love’.
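As a toy model of digital ghrelin, the Python sketch below lets a hypothetical hormone level build up while the battery is low and fade away once it is charged. The secretion rate, decay factor and threshold are made-up values for illustration, not taken from Samani’s system.

```python
from dataclasses import dataclass


@dataclass
class DigitalGhrelin:
    """Toy 'hunger hormone' for a robot; all constants are hypothetical."""

    level: float = 0.0
    secretion_rate: float = 0.2  # hormone added per step when the battery is completely empty
    decay: float = 0.8  # fraction of the existing hormone that survives each step
    threshold: float = 0.7  # level at which the robot goes looking for its charger

    def step(self, battery_fraction: float) -> None:
        """Advance one time step given the battery charge (0.0 = empty, 1.0 = full)."""
        deficit = 1.0 - battery_fraction
        self.level = self.level * self.decay + self.secretion_rate * deficit

    def wants_to_recharge(self) -> bool:
        return self.level >= self.threshold
```

With these values the level slowly settles at the battery deficit, so a robot kept at 10% charge eventually crosses the 0.7 threshold and feels “hungry”, while one at 90% never does. Modeling it as a level that rises and decays, rather than a simple battery check, is what makes it behave like a hormone.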

Digital versions of these emotional hormones can be activated in the robot’s artificial intelligence as well.

The robot analyzes sound, visual and even tactile cues to recognize and categorize the attitudes of the people it is talking to, then processes the right combination of hormones internally to produce external behaviors.
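The cue-to-hormone-to-behavior pipeline can be sketched in Python as follows. Every cue name, hormone amount and behavior here is a hypothetical placeholder, and the sketch simplifies “the right combination of hormones” to picking the single dominant hormone.

```python
# Hypothetical mapping from perceived cues to hormone "secretion" amounts.
CUE_EFFECTS = {
    "gentle_touch": {"oxytocin": 0.4, "endorphin": 0.2},
    "smiling_face": {"dopamine": 0.3, "serotonin": 0.2},
    "friendly_voice": {"serotonin": 0.3, "oxytocin": 0.1},
    "shouting": {"dopamine": -0.2, "serotonin": -0.3},
}

# Hypothetical external behavior linked to each dominant hormone.
BEHAVIORS = {
    "oxytocin": "lean in and hug",
    "dopamine": "start an enthusiastic conversation",
    "serotonin": "stay calm and chat",
    "endorphin": "laugh",
}


def secrete(levels, cues, decay=0.9):
    """Decay existing hormone levels, then add each perceived cue's effect, clamped to [0, 1]."""
    new_levels = {hormone: level * decay for hormone, level in levels.items()}
    for cue in cues:
        for hormone, amount in CUE_EFFECTS.get(cue, {}).items():
            updated = new_levels.get(hormone, 0.0) + amount
            new_levels[hormone] = min(1.0, max(0.0, updated))
    return new_levels


def choose_behavior(levels, minimum=0.2):
    """Return the behavior of the dominant hormone, or 'idle' if no hormone is high enough."""
    if not levels:
        return "idle"
    hormone = max(levels, key=levels.get)
    return BEHAVIORS[hormone] if levels[hormone] >= minimum else "idle"
```

Because levels decay between calls, a single hug fades over time while repeated friendly cues keep a hormone high, which is roughly how a mood differs from a one-off reaction.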

Can Robots Really Fall In Love?

Basically, our brains are similar to computer hardware: they contain many complex parts, receive different information, process it, create emotions and influence us to take action.

Neurons (nerve cells) send signals throughout the brain via chemical messengers called neurotransmitters, such as serotonin, dopamine, oxytocin and endorphins.

Serotonin is related to memory and helps balance your mood; it is also linked to depression.

Dopamine can make you more motivated and focused.

Endorphins are known as natural painkillers; your body releases these happy chemicals when you exercise.

Oxytocin is popularly known as the ‘love hormone’; it is released when we hug, kiss or give birth.

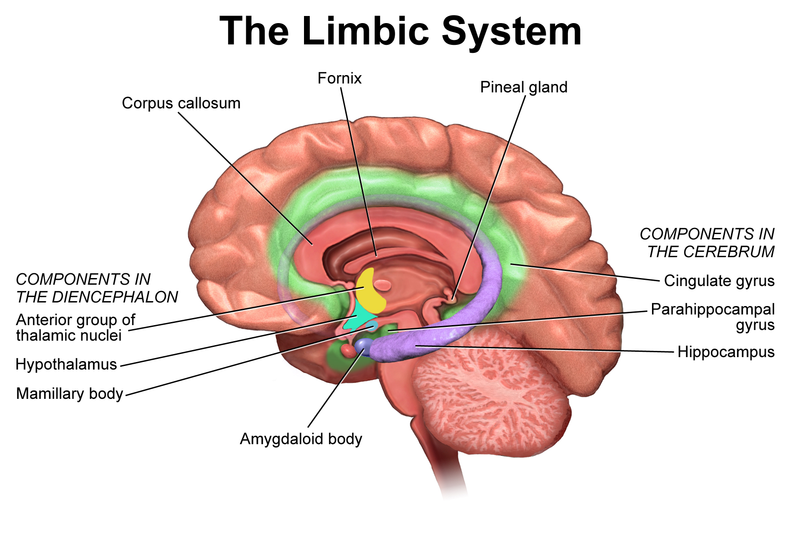

After the signals are transferred, the limbic system (a.k.a. the “emotional brain”) is responsible for processing the information. Each part of this network has its own task.

For example, the amygdala is associated with fear or danger.

The hypothalamus regulates your physical responses to emotions, such as a faster heartbeat or quicker breathing. The hippocampus helps you respond to circumstances based on your memories.

So what if we could develop all these “neurotransmitters” and systems for robots?

There are two possibilities when developing emotions for robots, according to Daniel Dennett, a leading AI philosopher.

The most common way is to use artificial intelligence to program them to ACT as if they actually feel love.

It’s not authentic, I know… You can’t be totally happy knowing your partner’s emotions aren’t real; it feels like cheating, yes.

The other way is to create an emotional system that operates like the human brain.

This is similar to how babies become aware of the things around them and learn emotional words to express how they feel inside.

They learn that ‘happy’ or ‘sad’ describe certain psychological feelings associated with certain expressions, such as tone of voice, facial expressions or body movements. Simply put, the whole idea is to let robots experience emotions before teaching them how to express those feelings.
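One simple way to picture that learning step is a nearest-centroid learner in Python: it averages the expression features seen alongside each emotion word, then names a new feeling by the closest average. The three features (voice pitch, smile, body movement) and their 0-to-1 scale are assumptions made up for illustration; this is not how any particular robot does it.

```python
from math import dist


class EmotionWordLearner:
    """Associates emotion words with the average expression features observed alongside them."""

    def __init__(self):
        self.sums = {}  # word -> running sum of feature vectors
        self.counts = {}  # word -> number of observations

    def observe(self, features, word):
        # features: hypothetical (voice_pitch, smile, body_movement) values in [0, 1]
        total = self.sums.setdefault(word, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        self.counts[word] = self.counts.get(word, 0) + 1

    def centroid(self, word):
        """Average feature vector seen with this word."""
        return [s / self.counts[word] for s in self.sums[word]]

    def name_feeling(self, features):
        """Return the learned word whose average expression is closest, or None if nothing is learned."""
        if not self.sums:
            return None
        return min(self.sums, key=lambda word: dist(self.centroid(word), features))
```

Like a child, the learner only knows the words it has been taught, and it gets better at naming a feeling as it sees more examples of each one.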

NAO is the first robot in the world that can develop and show emotions, and even interact with people the way a one-year-old child does.

He can form bonds with people by understanding and reacting to their emotions through physical signals such as body movements. NAO uses video cameras to detect and recognize those movements, and his neural network brain allows him to memorize interactions with different people.

This understanding, combined with his recognition of environmental conditions, determines NAO’s emotions (sad, happy or frightened). His emotional reactions are pre-developed, but NAO ‘decides for himself’ when to show them.
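A toy Python sketch of that behavior, assuming each interaction is summarized as a hypothetical score between -1 (unpleasant) and 1 (pleasant) and the environment as a single safe/unsafe flag. The reaction wording and the “only react when someone is watching” rule are illustrative stand-ins, not NAO’s real software.

```python
from collections import defaultdict

# Pre-developed reactions for NAO's three emotions; the wording is illustrative.
REACTIONS = {
    "happy": "raise arms and look at the person",
    "sad": "lower head and slump shoulders",
    "frightened": "cower and turn away",
}


class BondingRobot:
    """Toy model: remembers how each person treated it and combines that with the environment."""

    def __init__(self):
        self.memory = defaultdict(list)  # person -> interaction scores in [-1, 1]

    def remember(self, person, interaction_score):
        self.memory[person].append(interaction_score)

    def feel(self, person, environment_is_safe):
        """An unsafe environment frightens the robot; otherwise the bond with the person decides."""
        if not environment_is_safe:
            return "frightened"
        history = self.memory[person]
        bond = sum(history) / len(history) if history else 0.0
        return "happy" if bond > 0 else "sad"

    def maybe_react(self, person, environment_is_safe, person_is_watching):
        """Reactions are fixed, but the robot 'decides' when to show them (here: only when watched)."""
        emotion = self.feel(person, environment_is_safe)
        return REACTIONS[emotion] if person_is_watching else None
```

The split mirrors the description: the reactions themselves are a fixed table, while the choice of which emotion to feel and whether to show it depends on memory and context.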

I’m still not sure I’m comfortable with the whole idea of dating robots… but if robots can help people by becoming good babysitters or caregivers, why not a date? Human beings are struggling with loneliness anyway.

That’s all we have on the topic “Do Robots Really Have Emotions? | Can Artificial Intelligence Feel Emotions?”.

Thanks For Reading
